AI as a Design Medium

While the first article in this series on artificial intelligence (AI) explored how designers may be uniquely positioned to confront the technology’s implications, this second essay examines AI as a design medium—one that can reshape how designers think and produce knowledge.

Eric Rodenbeck, a lecturer in architecture at the Harvard Graduate School of Design and founder of Stamen Design, argues that AI is most generative when treated not as a system for producing answers, but as a condition for inquiry. Challenging prevailing narratives of efficiency and automation, he proposes a different trajectory: AI as a material to be tested, misused, and interrogated. Here, prompts become sketches, outputs become sites of critique, and design becomes a practice of engaging with complexity rather than resolving it.

For the past two years I have been teaching a course at the Harvard Graduate School of Design (GSD) called “Re-imagining the Archive.” The premise is simple: take collections that are supposed to stabilize knowledge and treat them instead as something you can work on, work through, and sometimes work against.

We have been inside the archives of the Museum of Modern Art (MoMA), the Harvard Art Museums, the Institute of Black Imagination, and the American Museum of Natural History. Our goal is not to visualize these archives cleanly, nor to make them legible faster, but rather to see what happens when you stay with them long enough that their seams start to show, when the gaps emerge as new sources of meaning. We do not treat data visualization as a neutral exercise in creating and communicating understanding. My students and I try out, evaluate, and critique rhetorical and aesthetic gestures as ways of creating knowledge through these archives.

At the same time, the ground has been shifting. Large language models (LLMs) and related systems have moved from curiosity to constant presence. Every day there is another invitation in my inbox: come talk about prompts, come talk about the future, come talk about what this replaces. The academy seems to be obsessed with these new tools, which are moving so fast we can barely keep up (if we do at all).

Recently, at the GSD, Edward Eigen gave a talk where he compared LLMs to the Talmud—not because they are sacred, but because they are dense, generative, and endlessly interpretable. You do not read them once; you return to them, argue with them, annotate them, build traditions around them. That framing stuck with me because most of what I hear about artificial intelligence (AI) still sounds like management consulting. Efficiency. Acceleration. Staff reduction. Faster pipelines. Better throughput. That is not how AI behaves in the studio. There, AI is much closer to ink. We treat it, and the data it shuffles, as material.

Prompts as sketches, models as material

If you treat AI as a tool, you ask: how do I get the right answer? If you treat it as a medium, you ask: what happens if I push this?

The difference is immediate. A prompt stops being an instruction that returns a correct (or not) answer and starts being a sketch—something provisional, something you can revise, distort, overwork. The point is not to nail it on the first try. You are trying to see what these models do under pressure.

In my class, the first principle is simple: Do not take what comes back from prompts at face value. Interrogate it. Iterate on it. Stay with it longer than feels efficient.

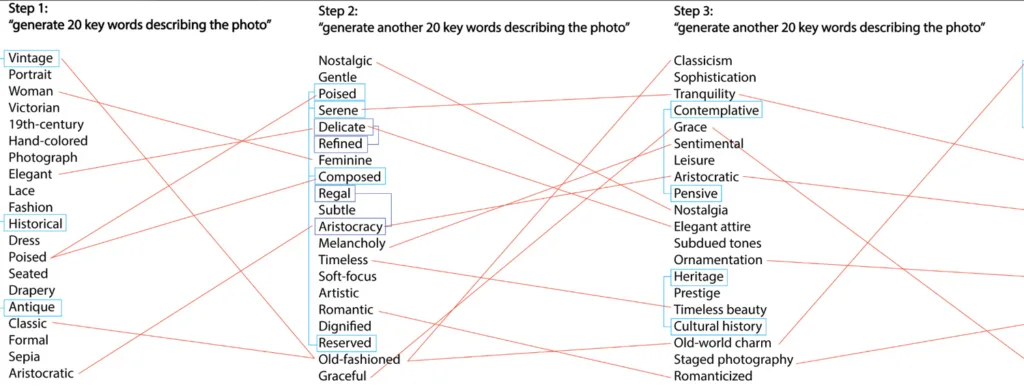

One of the clearest examples of this came from Roy Zhang, who graduated from the Master of Design Studies (MDes) program in 2025. He asked what sounds like a trivial question: what happens if you ask the same thing over and over again?

He took a single image from the collection at Harvard’s Houghton Library and asked ChatGPT to generate twenty keywords describing it. Then he did it again. And again. Twenty times, using the same prompt. He then compared each returned list of keywords with the lists that followed it.

What he found is something you can feel once you see it: the system settles. Early responses are wide, exploratory, a little strange. Over time, they collapse toward a narrower, more conventional description. Variability decreases. Language standardizes. The model becomes more confident and less interesting.

If you are using AI for efficiency, that is a feature. If you are using it as a design medium, that is a problem—and an opening.

Roy’s project turns prompting into something you can analyze, not just perform. It shows that AI does not just produce outputs; it produces trajectories. And those trajectories have shape, drift, bias toward the familiar. That is material. We are spinning up whole systems to be questioned, investigated, and visualized.
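For readers who want to try a version of this comparison themselves, here is a minimal sketch of one way to measure how much repeated keyword lists overlap. It is not Roy's method, which the essay does not specify: the Jaccard overlap measure, the function names, and the tiny sample lists are all assumptions made for illustration, and the data is invented rather than taken from his results.

```python
def normalize(keywords):
    """Lowercase and trim keywords so 'Map ' and 'map' count as the same term."""
    return {k.strip().lower() for k in keywords}


def jaccard(a, b):
    """Share of keywords two lists have in common (0 = disjoint, 1 = identical)."""
    set_a, set_b = normalize(a), normalize(b)
    union = set_a | set_b
    if not union:
        return 1.0  # two empty lists are treated as identical
    return len(set_a & set_b) / len(union)


def consecutive_overlap(runs):
    """Overlap between each run and the run that follows it."""
    return [jaccard(prev, nxt) for prev, nxt in zip(runs, runs[1:])]


def vocabulary_growth(runs):
    """Number of distinct keywords seen so far, after each run."""
    seen = set()
    growth = []
    for run in runs:
        seen.update(normalize(run))
        growth.append(len(seen))
    return growth


# Invented placeholder data: three short "runs" of the same prompt.
runs = [
    ["map", "ink", "coastline", "compass", "vellum"],
    ["map", "ink", "coastline", "border", "engraving"],
    ["map", "ink", "coastline", "border", "engraving"],
]

print(consecutive_overlap(runs))  # rising values suggest the lists are converging
print(vocabulary_growth(runs))    # a flattening curve suggests no new terms are appearing
```

Plotted over twenty real runs, the two lists would give one rough picture of a trajectory: whether the responses drift toward each other and whether new vocabulary stops arriving.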

Fluency on tap

A second principle in the course: You can now build entire projects through prompts. That does not mean you understand them. My students are using AI to write programs that scrape data from the internet, generate information, propose interfaces, draft code, structure arguments. You can get very far, very fast. That speed is real. But it also creates a new kind of risk and opportunity: fluency without understanding.

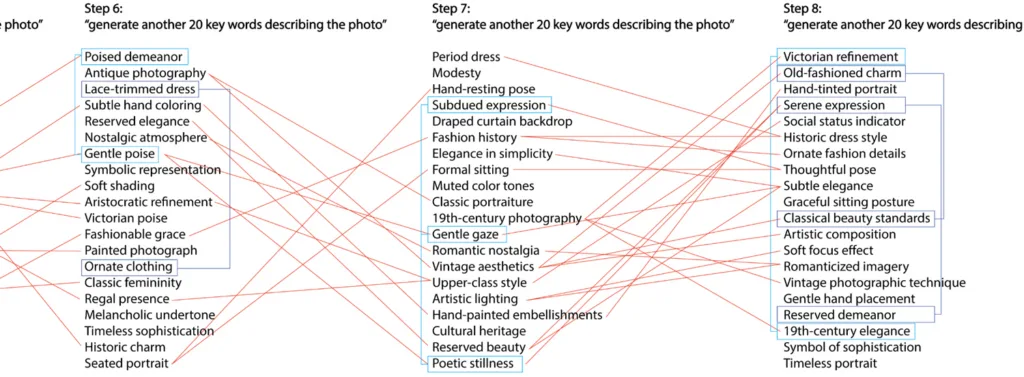

Kevin Tang and Yuanqing Xie, MDes students who graduated in 2025, built a project for the Institute of Black Imagination that embodies this tension.

Working with Dario Calmese’s podcast archive at the institute, Kevin and Yuanqing used AI to break conversations into individual sentences, analyze each for semantic content, and then rebuild the archive as an alternate audio player. Instead of browsing by episode, you browse by idea. Themes surface across conversations. A sentence spoken in one context finds resonance in another.

This is not just a clever interface; it is a re-indexing of the archive. And it only works because they did not stop at “the model can summarize this.” They pushed further. What happens if you atomize speech? What happens if you treat meaning as something that can be sliced, clustered, and recomposed? What gets lost? What gets amplified? At one point they had AI generating interstitial sentences to bridge each pair of sentences from the podcasts, and the result sounded like someone trying way too hard to sound cool by mediating between them.

The result is a system that feels less like a player and more like a landscape. You move through it by association, not sequence. Again, the important move is not the use of AI. It is the decision to treat its output as something to work on rather than accept.
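The general shape of browsing by idea rather than by episode can be sketched in a few lines. This is a loose illustration, not Kevin and Yuanqing's implementation: they used AI for the semantic analysis, whereas the sketch below substitutes a crude word-overlap score so that it runs with nothing but the Python standard library. The function names, the stopword list, and the two tiny transcripts are all invented for the example.

```python
import math
import re
from collections import Counter

# A very small, hypothetical stopword list; a real one would be much longer.
STOPWORDS = {"a", "an", "and", "the", "is", "of", "to", "in", "it", "that", "we", "i"}


def split_sentences(text):
    """Naive sentence split on '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def vectorize(sentence):
    """Bag-of-words counts: a crude stand-in for a semantic embedding."""
    words = re.findall(r"[a-z']+", sentence.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(u, v):
    """Cosine similarity between two word-count vectors."""
    dot = sum(u[w] * v[w] for w in u if w in v)
    norm_u = math.sqrt(sum(c * c for c in u.values()))
    norm_v = math.sqrt(sum(c * c for c in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def build_index(episodes):
    """Flatten {episode title: transcript} into (title, sentence, vector) records."""
    return [
        (title, sentence, vectorize(sentence))
        for title, text in episodes.items()
        for sentence in split_sentences(text)
    ]


def resonances(index, query, top_n=3):
    """Sentences most similar to the query, regardless of which episode they came from."""
    q = vectorize(query)
    scored = [(cosine(q, vec), title, sentence) for title, sentence, vec in index]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(title, sentence) for score, title, sentence in scored[:top_n] if score > 0]


# Invented placeholder transcripts, not material from the podcast archive.
episodes = {
    "Episode A": "Memory lives in the body. We archive what we fear losing.",
    "Episode B": "Design is a kind of memory. The body remembers the room.",
}

index = build_index(episodes)
for title, sentence in resonances(index, "memory and the body"):
    print(title, "|", sentence)
```

Even in this reduced form, the design question from the project is visible: once sentences are cut loose from their episodes, the similarity measure decides which ones end up next to each other, so the choice of measure is itself an editorial act.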

Showing your work (all of it)

A third principle: If you use AI, you show your prompts—not as a footnote, but as part of the work. What did you ask for? What did you get? What did you think of it? What did you ask next? Framing the inquiry this way accomplishes a few things.

It slows the work down, in a good way. It makes process visible—not just the polished artifact, but the chain of decisions behind it. And it restores a kind of authorship that AI tends to obscure. These systems produce artifacts that feel oddly anonymous, even when they are highly specific. Showing the prompts reveals the dialogue, the back-and-forth, the moments where something clicked or did not.

It also creates a space for critique. If a system produces something biased, flattened, or falsely authoritative, we can ask: where did that come from? The model? The data? The prompt? The desire? Usually, it’s all of them at once.

Misuse as method

The most interesting projects in the course almost always slightly misuse the technology. Cindy Coffee and Ieva Lygnugaryte’s work with MoMA’s collection is a good example. These MDes students, who will graduate in 2026, took photographs where both the authors and the subjects are unknown—images that sit in the archive with minimal context—and used AI to animate the subjects exiting the frame.

It is a simple gesture, but it lands hard.

The archive is built on containment. Objects are cataloged, stabilized, held in place. These images, already unstable in their attribution, become even less fixed. The subjects leave. The frame fails to hold them. What you are left with is not a better description of the image, but a different relationship to it, a sense that the archive is not complete, that it cannot fully account for what it holds. Technically, the project is straightforward (which is sort of amazing at this point). Conceptually, it is doing something more difficult: using AI not to fill gaps, but to widen them in a controlled way.

That is a design move.

AI as something you work through

Across these projects, a pattern emerges. AI is not used to finalize work. It is used to generate situations.

Roy’s repetitions produce a field you can analyze. Yuanqing and Kevin’s system produces a space you can navigate. Cindy and Ieva’s animations produce a rupture you can sit with.

In each case, the output is not the answer, but the start of another question. This is why the language of “tool” falls short. Tools are supposed to be predictable. They extend your intention cleanly into the world. AI, used this way, does not do that. It introduces noise. It reflects back assumptions you did not know you had. It collapses difference. It invents things. It stabilizes too quickly, then surprises you somewhere else. Those are not bugs to eliminate. They are properties to work with.

Designers already know how to do this. We know how to work with materials that resist us. Ink bleeds. Code breaks. Archives contradict themselves. The work is not to force compliance, but to develop a practice that can learn from what happens.

Staying with it

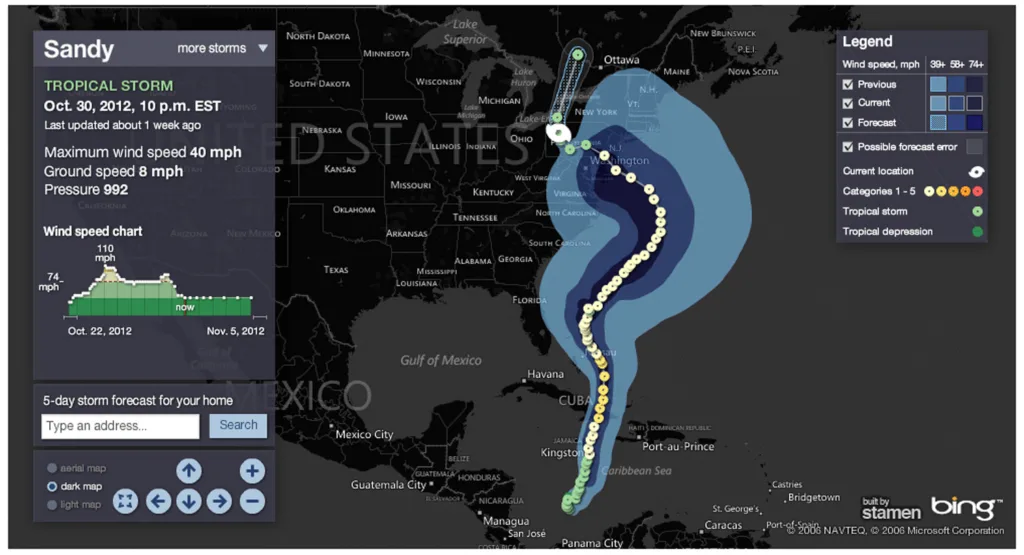

When Google Maps launched in 2005, I had been in business at Stamen, designing maps, for only a few years. My first, terrified thought was: “That’s it. We’re done; no humans will be involved in the design of maps moving forward.” The opposite turned out to be true: a golden age of web mapping was, from my point of view, partially enabled by Google making maps so easy to deploy that everyone wanted their own. I think we are seeing something similar with the rise of AI in the design space.

What I worry about most right now is not that AI will replace designers. It is that we will approach AI with too little ambition. If we treat it only as a faster way to do what we already know how to do, we get more of the same—faster maps, faster interfaces, faster summaries.

If we treat AI as a medium, we get something else. We get new ways of seeing collections, new forms of spatial and visual knowledge, new kinds of interfaces that do not just present information but expose its structure, its gaps, its biases. We also get new responsibilities: to be more critical, more explicit about process, more willing to show uncertainty and harness it as part of the doing of the work.

In the studio, this feels less like a revolution and more like a continuation, yet another material showing up on the table, just as disruptive as all the others—volatile, powerful, uneven, full of possibility. Something you do not just use. Something you work through.